
I don’t even like hardstyle that much, but I love the (cheesy?) grandeur and spectacle of hardstyle festivals.

That said, hardstyle itself has some nostalgic appeal: it reminds me of SNES battle music.

Thoughts in word form

Game of Thrones SPOILER ALERT

The finale to Game of Thrones Season 6 was fantastic. We got a lot of things we’ve been waiting for this season (the death of the High Sparrow), things we’ve been waiting for all series (a renewed Targaryen army/navy/air force), and things we’ve had a hunch about but no conclusive evidence for (R+L=J).

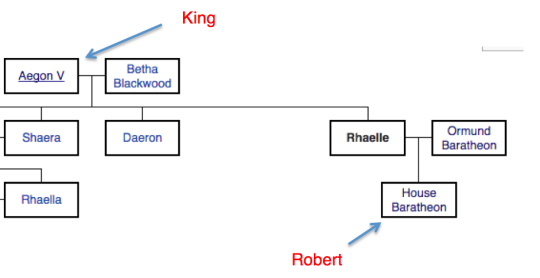

But one thing that bothered me, as a long-time Crusader Kings 2 player, is that Cersei really has no legal claim to the throne. Robert had a weak claim as a descendant of Orys Baratheon, a Targaryen bastard, and a fairly strong one as the grandson of Rhaelle Targaryen, daughter of King Aegon V. This claim was realized after Robert’s Rebellion, and his children, legitimate or otherwise, inherit it.

After the events of Season 6, none of Robert’s legitimate children are left. This leaves his bastards, as children of the late king, with arguably the strongest claim to the throne in Westeros. Daenerys is also the child of a late (mad) king and arguably has a stronger claim, since she is a legitimate daughter. Jon Snow is next in line as the grandson of the Mad King, albeit a bastard.

Beyond that, it gets hazy, since there aren’t any Targaryens left. The closest direct descendant of Orys Baratheon would probably be next in line, or else anyone who can prove Targaryen blood (an admission that’s probably bad for a Westerosi’s livelihood).

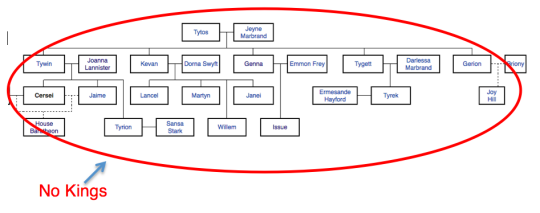

Cersei is not a descendant of any King of the Iron Throne. She married one, but that doesn’t give her a legal claim. Conquest is certainly a viable way of taking a title, but she can’t expect people to just fall in line without a legal battle, especially if her recently re-inherited and wildfire-averse brother doesn’t back her with the Lannister army.

This is all under the assumption that my Crusader Kings 2 knowledge translates to Westerosi law. The Game of Thrones mod for CK2 is seriously good and has been a significant time sink of mine lately.

I’ve had a hard time finding a simple and useful to-do list web service (probably because of my own pickiness, not because there aren’t good options), so I wanted to build my own. I started a Chrome New Tab extension; for now it just shows some placeholder text that motivates me every time I open a new tab.